Studio

ROLE Product design lead

USER Government and enterprise users + internal staff who rely on assistive technologies.

TARGET METRIC 0 WCAG 2.2 AA violations

TIMELINE 6 months

Information

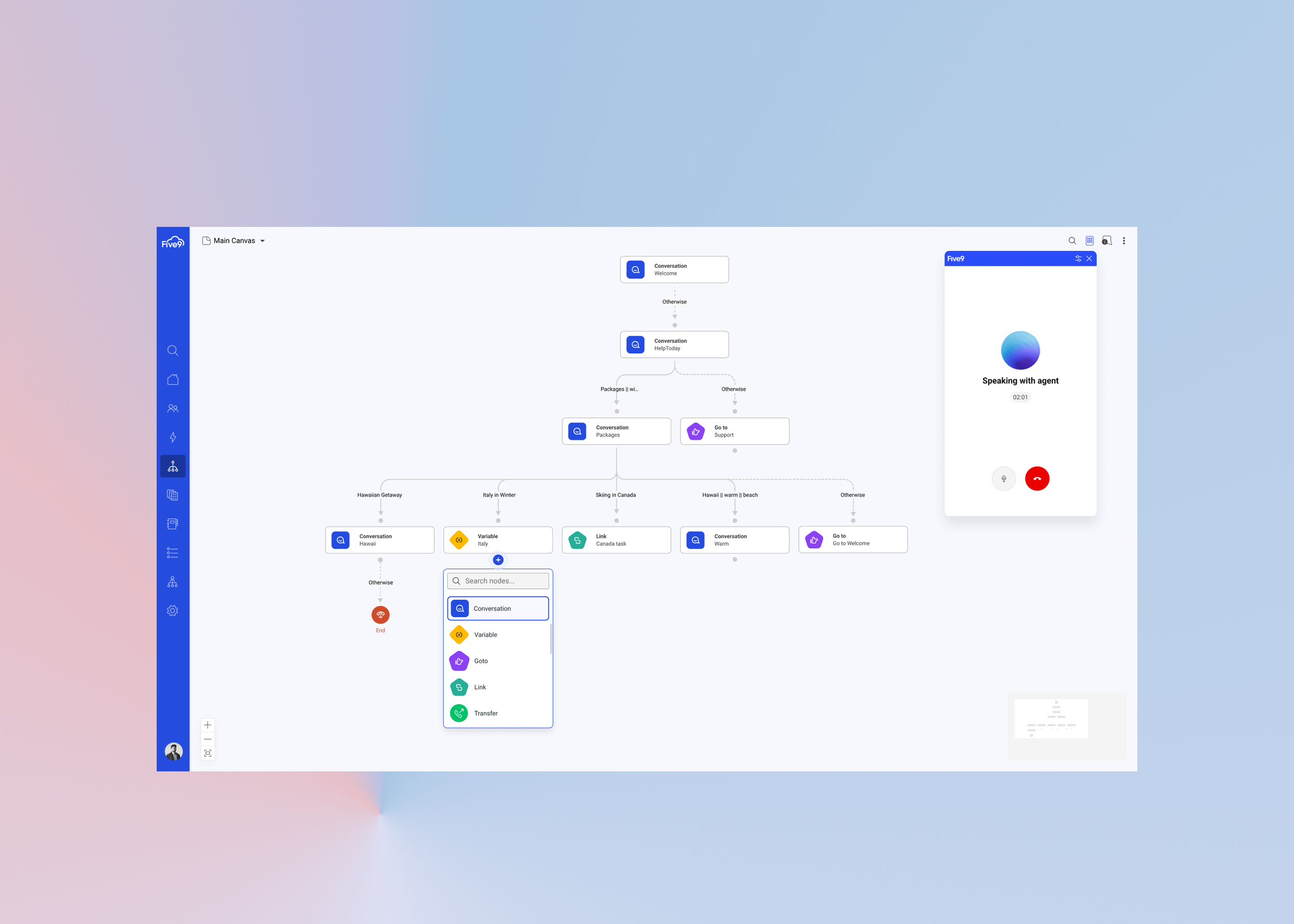

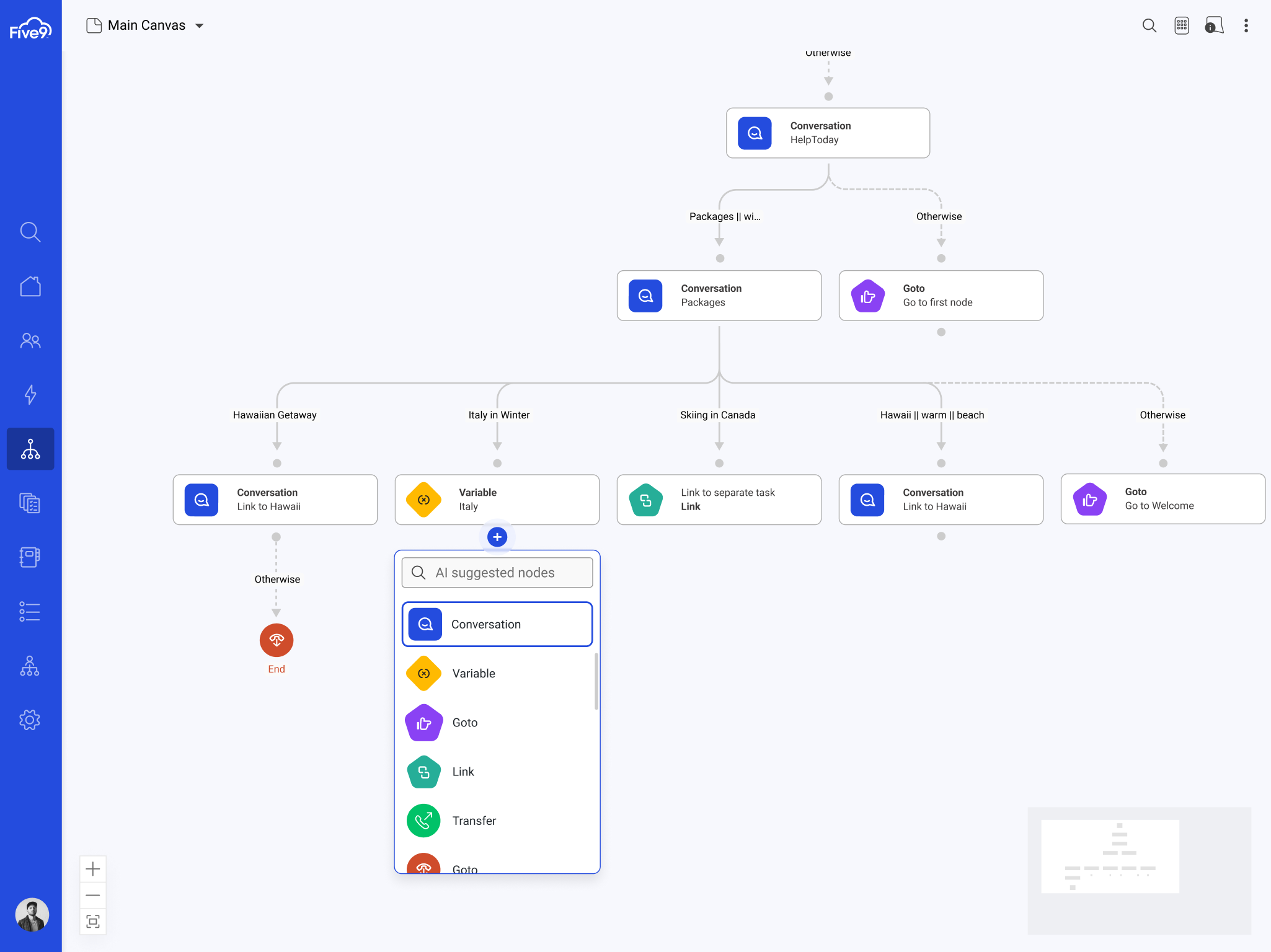

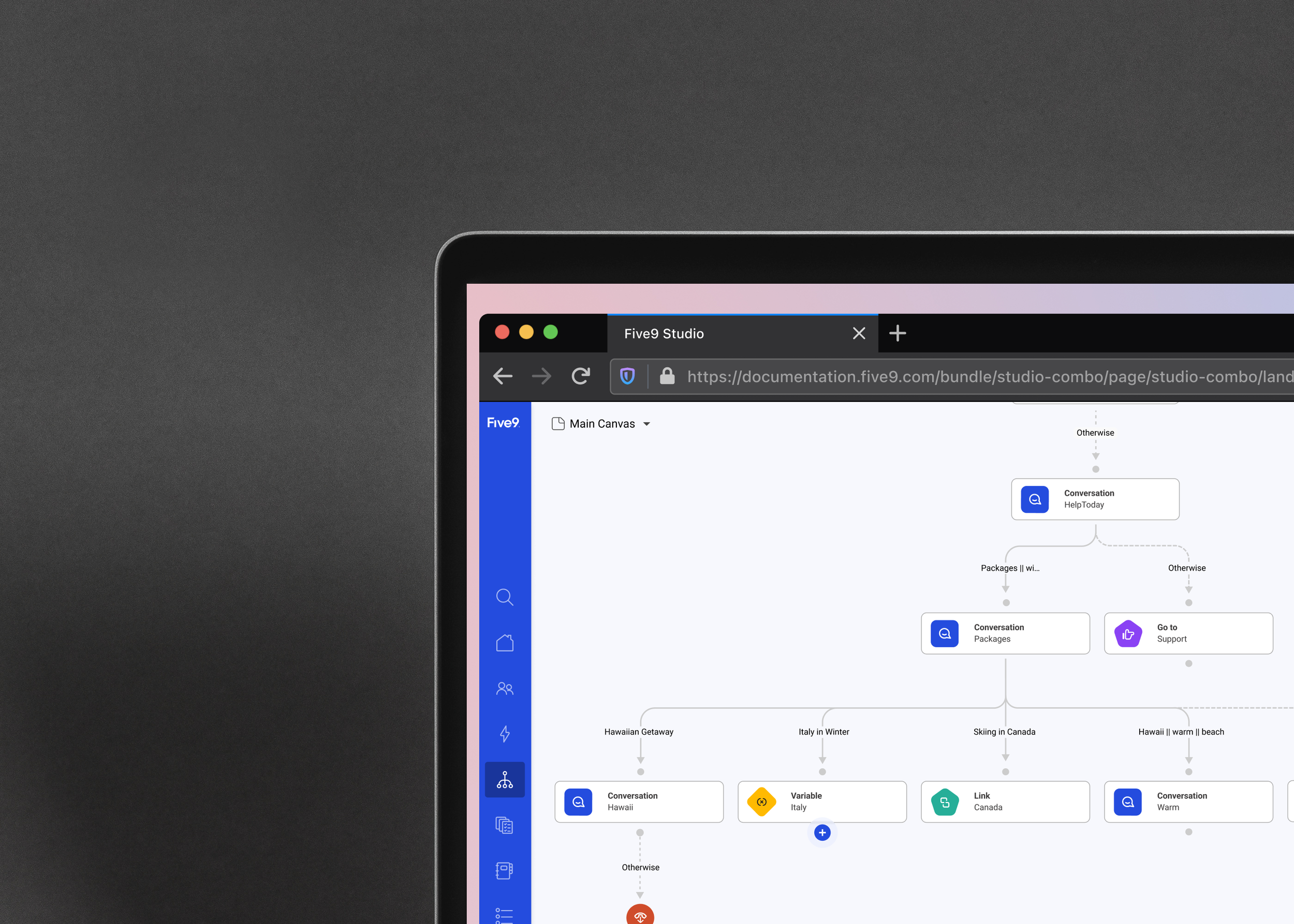

In 2024, Five9 set out to redesign its conversational AI flow-builder experience to meet the accessibility expectations of government agencies and other enterprise customers.

The flow builder is the core way users create and maintain virtual agent logic, yet the interface had not been meaningfully updated in years. Critically, it failed to meet accessibility standards (WCAG 2.1 AA), which blocked government contract approvals.

Goals

Deliver an accessibility-compliant redesign of the agent-builder interface, ensuring the product passes formal audits and becomes eligible for high-value government procurement deals.

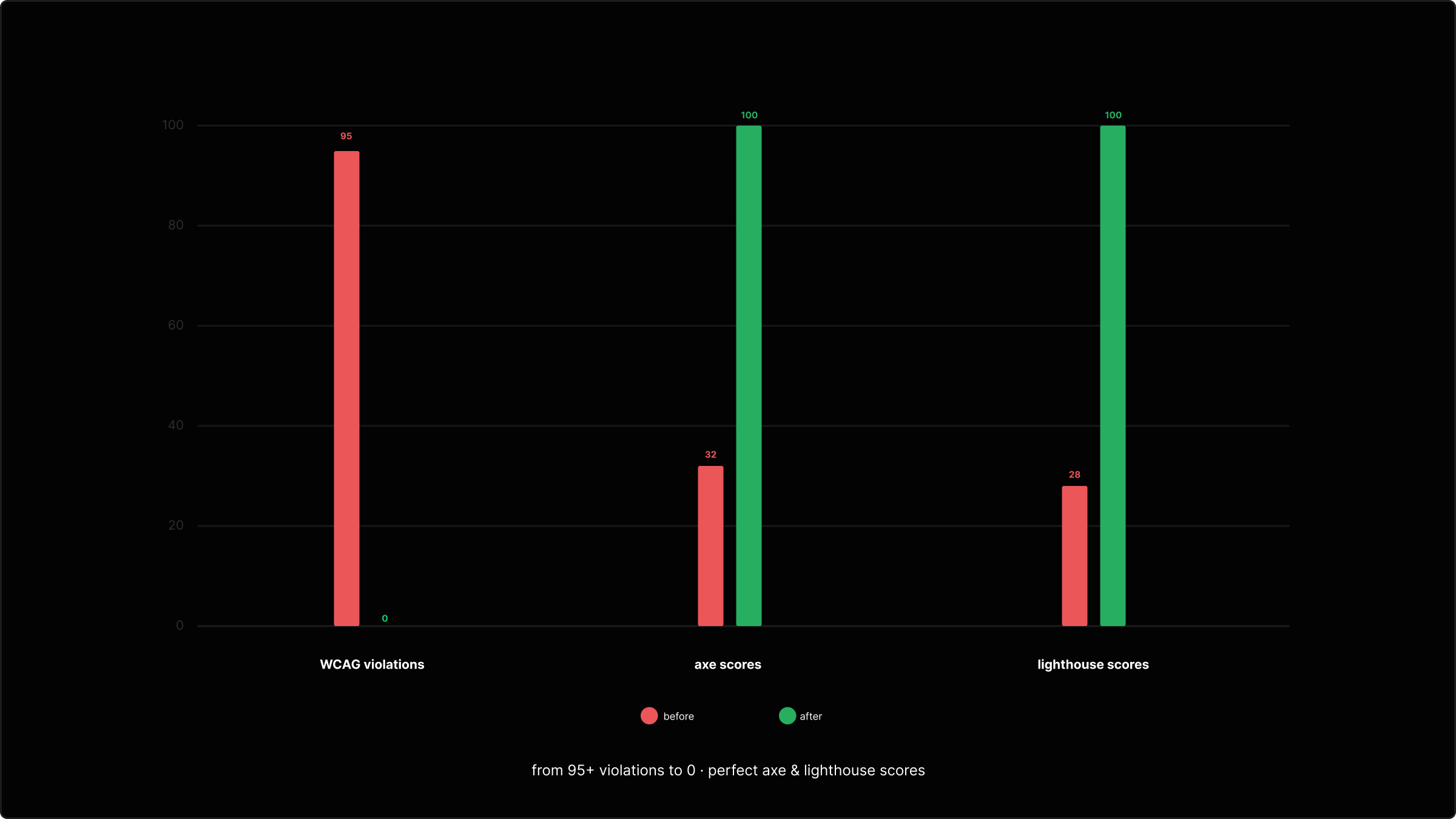

↳ 0 critical WCAG 2.2 AA violations in key flows.

↳ 100/100 scores on automated accessibility tools (Axe, Lighthouse).

↳ Positive review from an Accessibility SME.

↳ No new accessibility blocker issues raised by QA at release.

Current design & baseline scores

↳ Keyboard-only navigation support: NA

↳ On-screen zoom controls: NA

↳ Screen-reader support: Limited

↳ WCAG 2.2 AA violations: 95+

↳ Automated test scores: 23/100

↳ Lost contract access: -$5B

Insights

To understand why the existing experience was failing WCAG, I started with a deep inspection of the underlying implementation.

1. Technical + Codebase Audit

With the front-end engineering team, we reviewed the codebase:

Inspecting DOM structure, ARIA attributes, event handlers, and interaction patterns

Identifying reliance on a third-party org-chart library with no semantic markup

Validating that the library itself had no accessibility support and no active development, meaning fixes were not feasible

2. Heuristic Evaluation

With the accessibility SME, we ran a structured evaluation against WCAG 2.1 AA using:

Keyboard-only navigation tests

Screen reader testing (VoiceOver + NVDA)

Color, contrast, focus, and tab-order analysis

Interaction testing for motor-impaired users (gesture/mouse-dependent actions)
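As an illustration of the contrast part of this evaluation, the check below is a minimal sketch of the standard WCAG contrast-ratio formula. The function names are illustrative and are not taken from the project. The thresholds are the AA values: 4.5:1 for normal text, and 3:1 for large text and UI components.

```typescript
// Minimal sketch of the WCAG contrast-ratio calculation for sRGB colors.
// Names are illustrative; they are not from the project's codebase.
type RGB = [number, number, number]; // 0-255 per channel

// Convert one 8-bit sRGB channel to its linear-light value.
function channelToLinear(c: number): number {
  const s = c / 255;
  return s <= 0.04045 ? s / 12.92 : Math.pow((s + 0.055) / 1.055, 2.4);
}

// Relative luminance as defined by WCAG.
function relativeLuminance([r, g, b]: RGB): number {
  return (
    0.2126 * channelToLinear(r) +
    0.7152 * channelToLinear(g) +
    0.0722 * channelToLinear(b)
  );
}

// Contrast ratio between two colors, from 1 (identical) to 21 (black on white).
function contrastRatio(a: RGB, b: RGB): number {
  const la = relativeLuminance(a);
  const lb = relativeLuminance(b);
  return (Math.max(la, lb) + 0.05) / (Math.min(la, lb) + 0.05);
}

// AA thresholds: 4.5:1 for normal text, 3:1 for large text and UI components.
function meetsAA(ratio: number, kind: "text" | "largeText" | "uiComponent"): boolean {
  return ratio >= (kind === "text" ? 4.5 : 3);
}
```

For example, `meetsAA(contrastRatio([118, 118, 118], [255, 255, 255]), "text")` passes, while the slightly lighter gray `[119, 119, 119]` on white falls just under the 4.5:1 threshold.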

3. End-to-End User Interaction Mapping

Together with the accessibility SME, we mapped the full user journey through the flow builder using different assistive workflows:

Keyboard-only

Screen reader

Magnification

Alternative input devices

This revealed that even core tasks (selecting a node, creating a connection, zooming, etc.) were either impossible or required non-accessible gestures.

4. Engineering & Performance Profiling

In partnership with engineering, we stress-tested the builder with real enterprise-scale IVA flows. We reviewed:

DOM node count at scale

Re-render patterns

Zooming / panning performance

Memory and CPU behavior

As flows grew, the library's performance degraded significantly.

5. Cross-Functional Interviews & Failure Reports

I interviewed:

QA accessibility testers

Internal SMEs

Support teams who had received complaints from enterprise customers

PMs managing government-sector accounts

These conversations confirmed:

Government contracts were formally blocked due to accessibility failures

Enterprise customers had already escalated issues

Workarounds weren’t viable

6. Comparative Analysis + Benchmarks

I also benchmarked against:

Modern flow builders with accessible interaction models

Developer tools using semantic trees

Emerging best practices for accessible canvas-based interfaces

This reinforced that we couldn’t retrofit accessibility into the existing system.

This wasn’t a cosmetic problem; it was a structural accessibility and platform issue.

Exploration - Wireframes

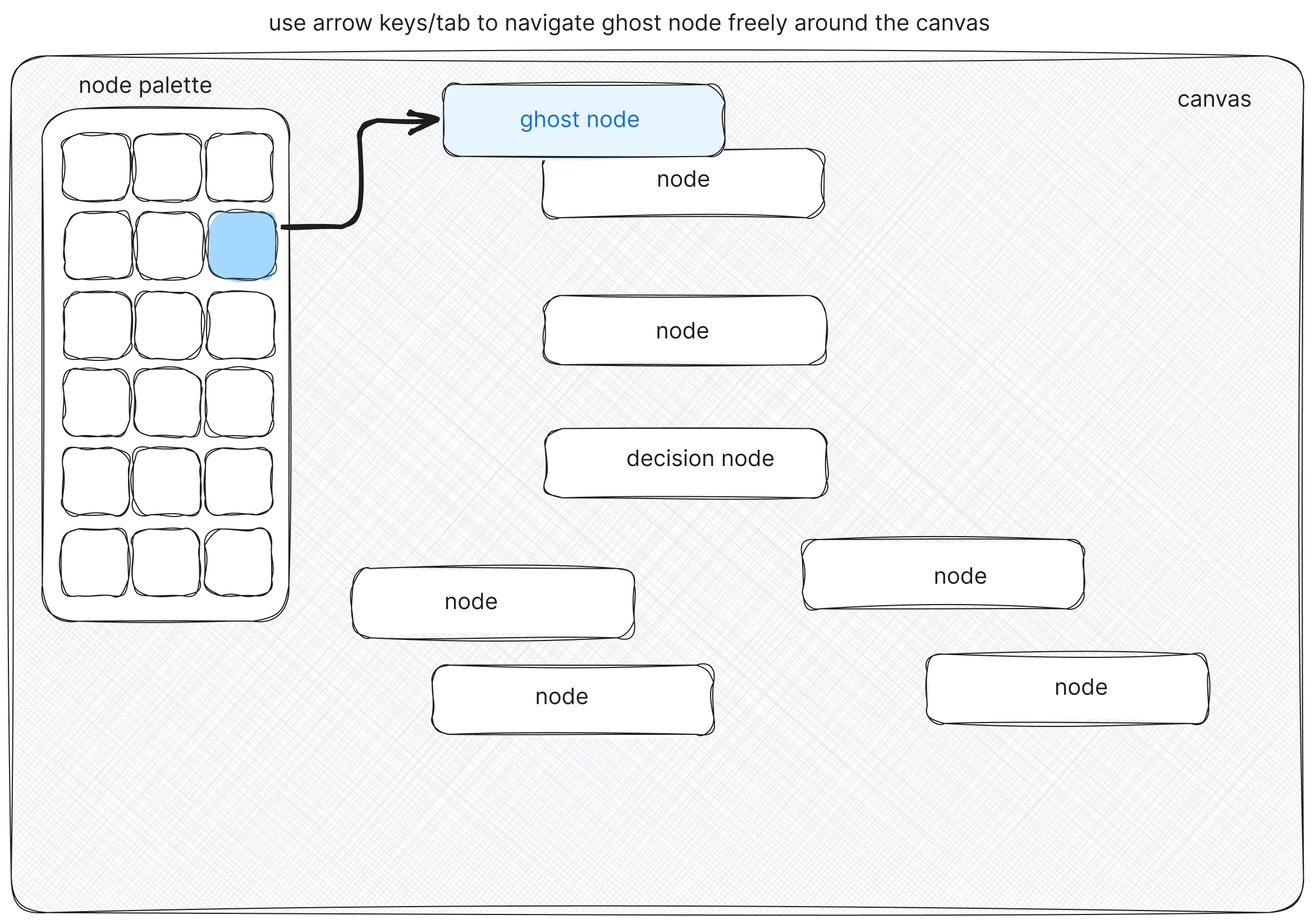

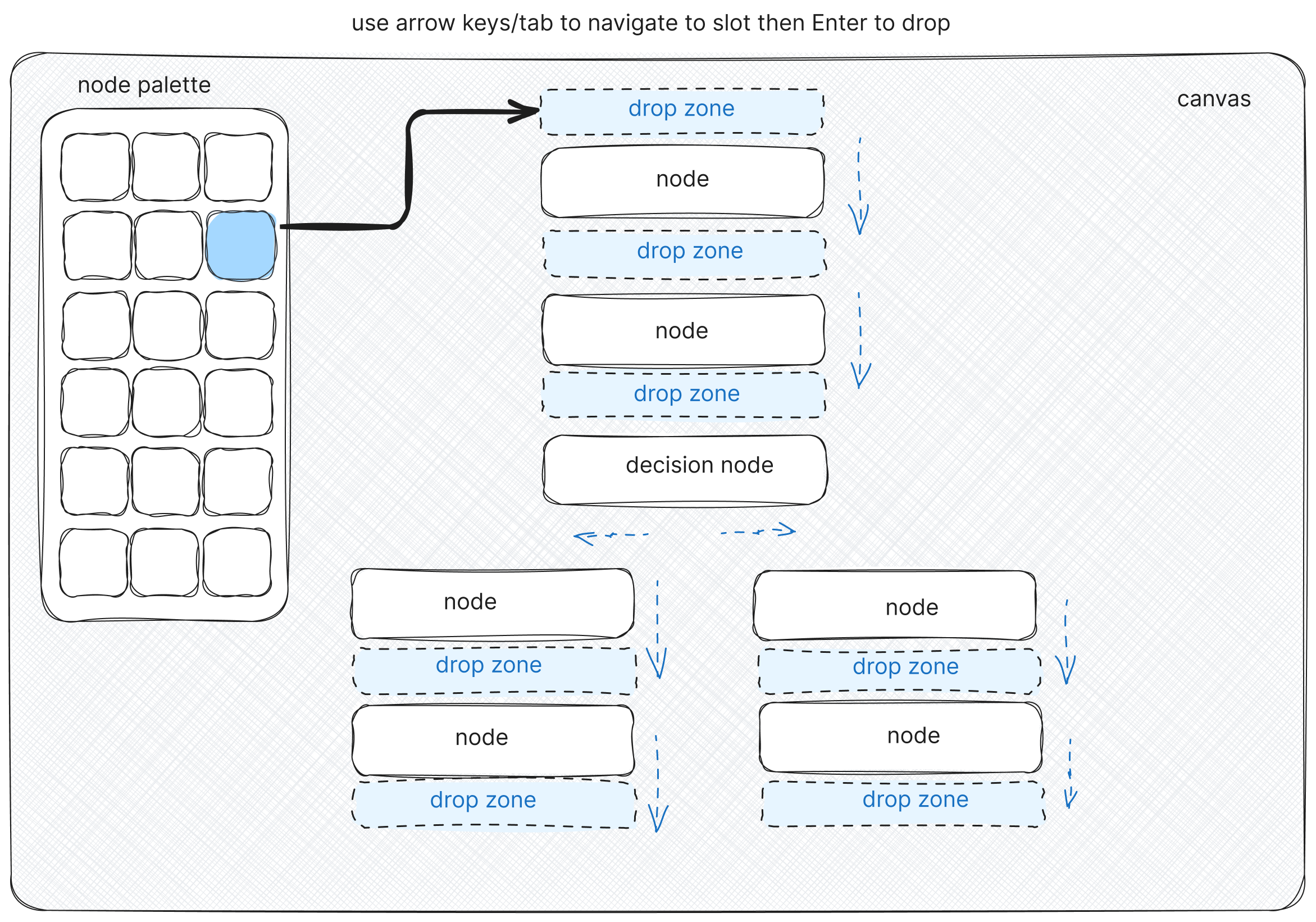

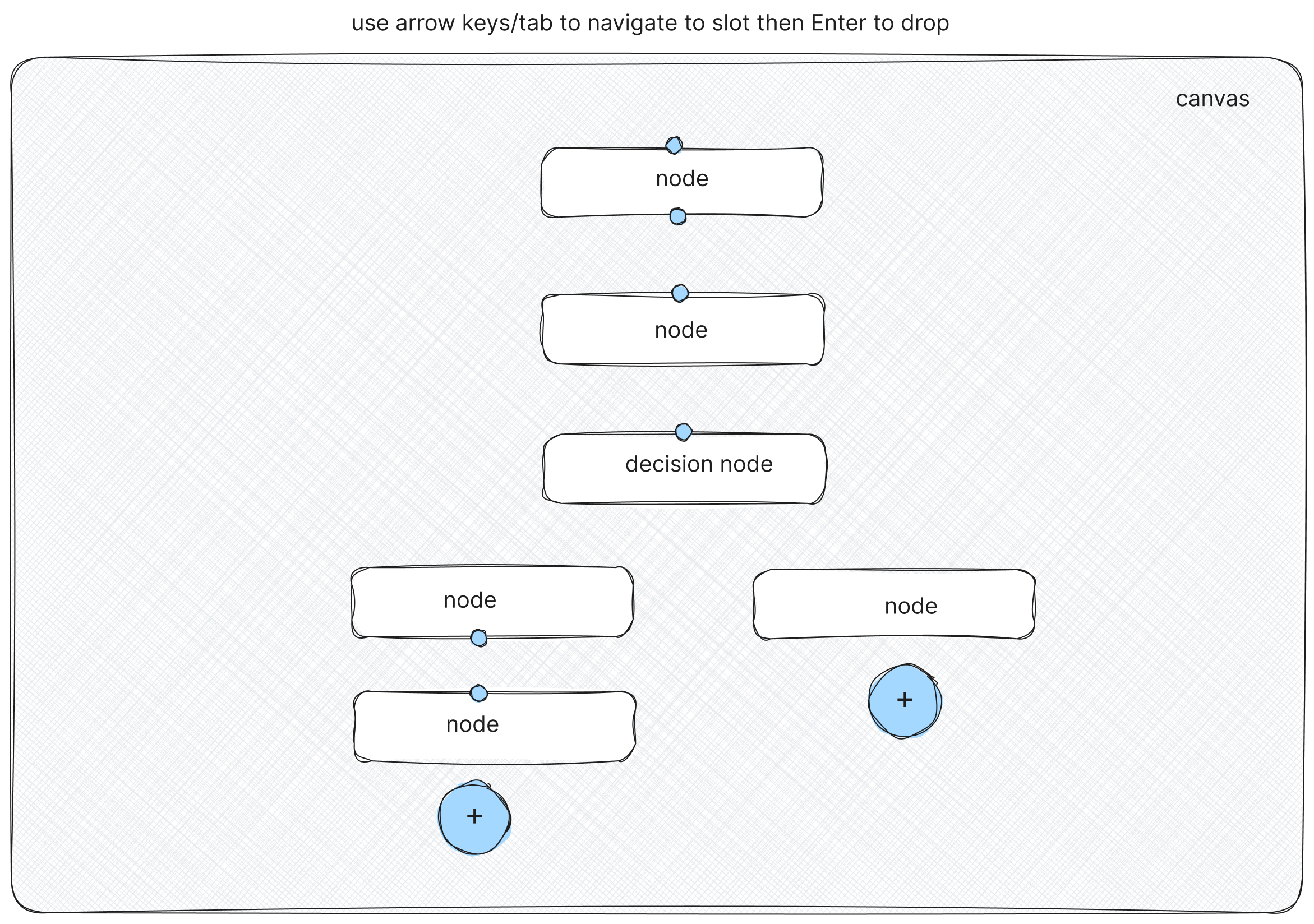

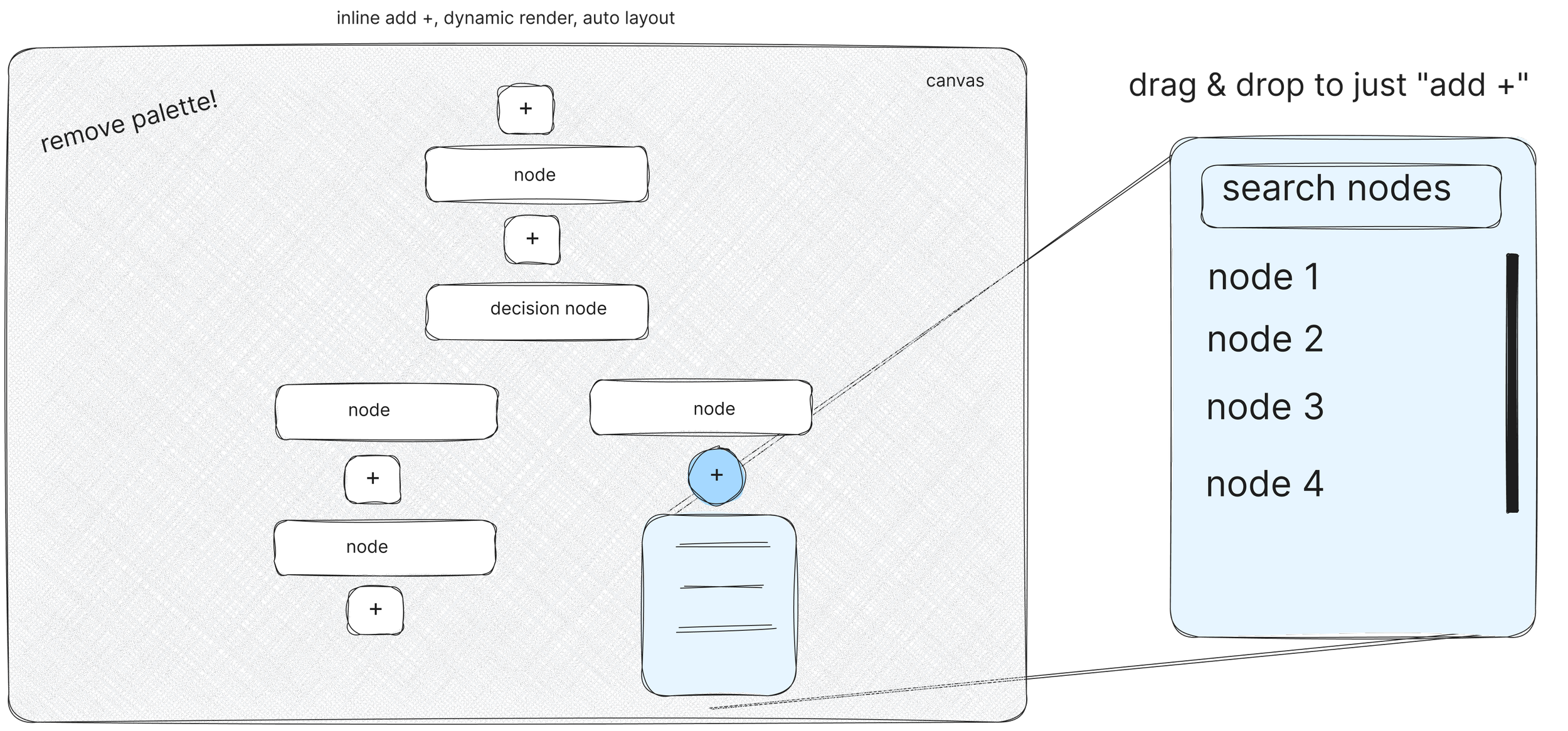

Even after rebuilding the frontend with a modern, accessibility-first foundation — React, semantic HTML, ARIA, and full keyboard support — one truth remained: drag-and-drop, by its nature, is not accessible.

Some patterns simply limit what’s possible, no matter how well they’re engineered.

Exploration - Interaction Sketching

Once I had a hypothesis for a keyboard-only drag-and-drop interaction, I moved over to Figma Make (I was an early beta user) to carry out some interaction sketching.

One of the limitations of the standard ‘Tab’ input for keyboard-only navigation is that you need to make a lot of assumptions about hierarchy and Tab ordering. For a typical website this is acceptable, but for a completely visual flow builder I felt it would be too restrictive.

↳ I was able to test node navigation via the keyboard’s directional arrow keys to jump to the nearest node in any direction or axis.

↳ The Esc key would exit ‘node mode’ and enter ‘canvas mode’, where the same arrow keys pan the entire canvas.

↳ The Tab key from ‘canvas mode’ would enter ‘node mode’ at whichever node was closest to the center of the current viewport.

↳ The + and - keys were mapped to canvas zoom, and on-screen zoom controls were added alongside them.
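The interaction model above can be sketched as a small state function. This is an illustration under stated assumptions, not the shipped implementation: the type names, `PAN_STEP`, `ZOOM_STEP`, and the off-axis penalty used to score the "nearest" node are all hypothetical, and node positions are treated as single points in canvas coordinates.

```typescript
type Direction = "up" | "down" | "left" | "right";
type Mode = "node" | "canvas";
interface Point { x: number; y: number }
interface FlowNode extends Point { id: string }
interface Size { width: number; height: number }
interface BuilderState { mode: Mode; focusedId: string | null; pan: Point; zoom: number }

const ARROWS: Record<string, Direction> = {
  ArrowUp: "up", ArrowDown: "down", ArrowLeft: "left", ArrowRight: "right",
};
// Unit vectors in screen coordinates (y grows downward).
const UNIT: Record<Direction, Point> = {
  up: { x: 0, y: -1 }, down: { x: 0, y: 1 }, left: { x: -1, y: 0 }, right: { x: 1, y: 0 },
};
const PAN_STEP = 40;   // hypothetical pan distance per key press
const ZOOM_STEP = 0.1; // hypothetical zoom increment per key press

// Nearest node in the pressed direction; off-axis distance is penalized (assumed weight of 2).
function nearestInDirection(nodes: FlowNode[], from: Point, dir: Direction): FlowNode | null {
  const u = UNIT[dir];
  let best: FlowNode | null = null;
  let bestScore = Infinity;
  for (const n of nodes) {
    const dx = n.x - from.x;
    const dy = n.y - from.y;
    const along = dx * u.x + dy * u.y;           // progress along the pressed direction
    if (along <= 0) continue;                     // skips nodes behind, level with, or at the origin
    const across = Math.abs(dx * u.y - dy * u.x); // perpendicular offset
    const score = along + 2 * across;
    if (score < bestScore) { best = n; bestScore = score; }
  }
  return best;
}

// Node closest to the center of the visible area (pan is the viewport's top-left corner).
function nearestToViewportCenter(nodes: FlowNode[], pan: Point, zoom: number, view: Size): FlowNode | null {
  const cx = pan.x + view.width / (2 * zoom);
  const cy = pan.y + view.height / (2 * zoom);
  let best: FlowNode | null = null;
  let bestDist = Infinity;
  for (const n of nodes) {
    const dist = Math.hypot(n.x - cx, n.y - cy);
    if (dist < bestDist) { best = n; bestDist = dist; }
  }
  return best;
}

// Pure key handler: returns the next state for a KeyboardEvent.key value.
function handleKey(state: BuilderState, key: string, nodes: FlowNode[], view: Size): BuilderState {
  if (key === "+" || key === "-") {
    const zoom = Math.max(0.1, state.zoom + (key === "+" ? ZOOM_STEP : -ZOOM_STEP));
    return { ...state, zoom };
  }
  if (state.mode === "node") {
    if (key === "Escape") return { ...state, mode: "canvas" };
    const dir = ARROWS[key];
    const current = nodes.find((n) => n.id === state.focusedId);
    if (!dir || !current) return state;
    const next = nearestInDirection(nodes, current, dir);
    return next ? { ...state, focusedId: next.id } : state;
  }
  // Canvas mode: Tab re-enters node mode, arrow keys pan the viewport.
  if (key === "Tab") {
    const target = nearestToViewportCenter(nodes, state.pan, state.zoom, view);
    return target ? { ...state, mode: "node", focusedId: target.id } : state;
  }
  const dir = ARROWS[key];
  if (!dir) return state;
  const u = UNIT[dir];
  return { ...state, pan: { x: state.pan.x + u.x * PAN_STEP, y: state.pan.y + u.y * PAN_STEP } };
}
```

Keeping the handler a pure function of state and key makes the behavior easy to exercise in a prototype or a unit test before wiring it to a real `keydown` listener and focus management.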

Testing & validation

Because of budget and time constraints, we could not run qualitative testing with real assistive-technology users. We relied on automated tools and SME review, with a clear plan to add user research later. Even so, we were able to resolve all detected violations and achieve perfect scores on automated tests.

Tools used

Automated: Axe, Lighthouse

Assistive tech: NVDA, VoiceOver

Manual: Keyboard-only navigation checks
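To show how automated output can be turned into a release gate, here is a hedged sketch that filters an Axe-style results object down to WCAG A/AA violations and counts the critical ones. The interfaces are a simplified local subset of the result shape (`violations` entries with `id`, `impact`, `tags`, and `nodes`). `summarizeAA` and the sample data are hypothetical and are not the project's actual pipeline.

```typescript
// Simplified, locally defined subset of an Axe-style results object (an assumption for this sketch).
type Impact = "minor" | "moderate" | "serious" | "critical";
interface Violation { id: string; impact: Impact | null; tags: string[]; nodes: unknown[] }
interface AuditResults { violations: Violation[] }

// Tags that mark rules mapped to WCAG 2.0, 2.1, and 2.2 at levels A and AA.
const AA_TAGS = new Set(["wcag2a", "wcag2aa", "wcag21a", "wcag21aa", "wcag22aa"]);

interface AASummary { total: number; critical: number; ruleIds: string[] }

// Keep only violations tied to WCAG A/AA rules and summarize them.
function summarizeAA(results: AuditResults): AASummary {
  const aa = results.violations.filter((v) => v.tags.some((t) => AA_TAGS.has(t)));
  return {
    total: aa.length,
    critical: aa.filter((v) => v.impact === "critical").length,
    ruleIds: aa.map((v) => v.id),
  };
}

// Hypothetical sample: two WCAG-mapped violations and one best-practice finding.
const sample: AuditResults = {
  violations: [
    { id: "button-name", impact: "critical", tags: ["wcag2a", "wcag412"], nodes: [{}] },
    { id: "color-contrast", impact: "serious", tags: ["wcag2aa", "wcag143"], nodes: [{}, {}] },
    { id: "region", impact: "moderate", tags: ["best-practice"], nodes: [{}] },
  ],
};

const summary = summarizeAA(sample);
console.log(summary);
```

A check like `summary.total === 0` could then fail a build for key flows, though automated tools only catch a subset of issues, which is why the screen-reader and keyboard-only checks listed above remain necessary.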

↳ Keyboard-only navigation support: Full

↳ On-screen zoom controls: Yes

↳ Screen-reader support: Full

↳ WCAG 2.2 AA violations: 0

↳ Automated test scores: 100/100

↳ Potential new contracts: $5B+

If I had two more weeks...

↳ Run usability sessions with 3–5 users who rely on screen readers or keyboard navigation to validate the new flows.

↳ Iterate on focus order, announcements, and error messaging based on observed behavior, not just tool output or my assumptions.

↳ Expand the accessibility work to more edge cases and secondary flows in the builder (e.g., advanced settings, rarely used dialogs).

↳ Create a playbook for other teams at Five9 to apply the same approach to their products.

This case study highlights a focused 4-week effort within a broader multi-month initiative to rebuild Five9’s Virtual Agent Builder with accessibility at the core. I led the accessibility-first redesign, partnering closely with engineering, QA, Professional Services, and our Accessibility SME to replace an inaccessible legacy interface with a fully WCAG 2.2 AA–compliant experience.

What’s shown here represents a small but important part of the larger platform modernization work—new interaction patterns, accessible components, and system-level foundations that now guide future product development across Five9.